Estimating (LLM) bot traffic on my website

24 Jan 2026

TL;DR: I configured local log analytics reporting on my site with `goaccess` and `pforret/goax`, a bash wrapper script, to analyse regular, SEO and LLM bot traffic. I then also extended my `fail2ban` configuration to ban vulnerability scanning bots.

toolstud.io

I have a mildly popular website of web calculators and converters that sees a decent amount of traffic per month. But just how much of that traffic is OpenAI/Anthropic/Google/Meta/… scraping my content? And how can I get a view on that, given that the bots don’t show up on my SimpleAnalytics dashboard?

Bot traffic

When we talk about bot traffic, we mean automated HTTP requests — not humans clicking around, but scripts and crawlers hitting your server. They usually identify themselves via the User-Agent header, though some lie or omit it entirely.

- The classic bots are SEO crawlers like Googlebot, Bingbot and DuckDuckBot. They index your content for search results, generally play nice, and respect your `robots.txt`. You want these.
- Then there’s the newer wave of LLM bots: GPTBot (OpenAI), Claude-Web (Anthropic), CCBot (Common Crawl), Bytespider (ByteDance). These scrape content for AI training data or RAG retrieval systems. This category is growing fast, and whether they respect `robots.txt` varies.
- Finally, you have vulnerability scanners probing for exposed `.env` files, `/wp-admin` endpoints, SQL injection paths, and other low-hanging fruit. Some are legitimate security researchers, many are not.

If you’re using an external analytics service like SimpleAnalytics or Google Analytics, you’re only seeing human/browser visitors. These services work by injecting a JavaScript snippet into your pages. Most bots don’t execute JavaScript: they fetch the raw HTML and move on. Your analytics script never runs, so these requests are invisible.

To see the full picture, you need the server-side logs. Your Apache or Nginx access logs record every HTTP request, regardless of whether JavaScript was executed. That’s where the bots live.
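To make the idea of finding bots in access logs concrete, here is a minimal Python sketch that pulls the User-Agent out of a combined-format log line and sorts it into one of the categories described above. The substring lists, the sample lines and the rule that a missing User-Agent counts as a bot are my own assumptions for illustration; this is not how GoAccess or goax classify traffic.

```python
import re
from collections import Counter

# In the combined log format the User-Agent is the last double-quoted field.
UA_PATTERN = re.compile(r'"(?P<ua>[^"]*)"\s*$')

# Illustrative substrings only (assumption): real bot lists are much longer.
LLM_BOTS = ("gptbot", "claudebot", "claude-web", "ccbot", "bytespider")
SEO_BOTS = ("googlebot", "bingbot", "duckduckbot")
GENERIC_BOT_HINTS = ("bot", "crawler", "spider")


def classify(line: str) -> str:
    """Return 'llm_bot', 'seo_bot', 'other_bot' or 'human' for one log line."""
    match = UA_PATTERN.search(line)
    ua = match.group("ua").lower() if match else ""
    if not ua or ua == "-":
        return "other_bot"  # assumption: no User-Agent is treated as a bot
    if any(name in ua for name in LLM_BOTS):
        return "llm_bot"
    if any(name in ua for name in SEO_BOTS):
        return "seo_bot"
    if any(hint in ua for hint in GENERIC_BOT_HINTS):
        return "other_bot"
    return "human"


def count_categories(lines) -> Counter:
    """Count how many log lines fall into each category."""
    return Counter(classify(line) for line in lines)


# Hypothetical sample lines, using documentation-only IP ranges.
SAMPLE_LINES = [
    '203.0.113.5 - - [24/Jan/2026:10:00:00 +0000] "GET /calc HTTP/1.1" 200 512 '
    '"-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '198.51.100.8 - - [24/Jan/2026:10:00:01 +0000] "GET /robots.txt HTTP/1.1" 200 64 '
    '"-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0 Safari/537.36"',
    '192.0.2.44 - - [24/Jan/2026:10:00:02 +0000] "GET / HTTP/1.1" 200 900 '
    '"-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]

if __name__ == "__main__":
    print(count_categories(SAMPLE_LINES))
```

The sketch matches on the User-Agent field only, so a browser requesting `/robots.txt` is not mistaken for a bot just because the path contains "bot". Bots that send a browser-like User-Agent will still be counted as humans.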

GoAccess

I quickly figured out that the best program to use for this would be GoAccess: a web log analyzer that can generate static HTML reports. It parses Apache, Nginx, and other common log formats out of the box.

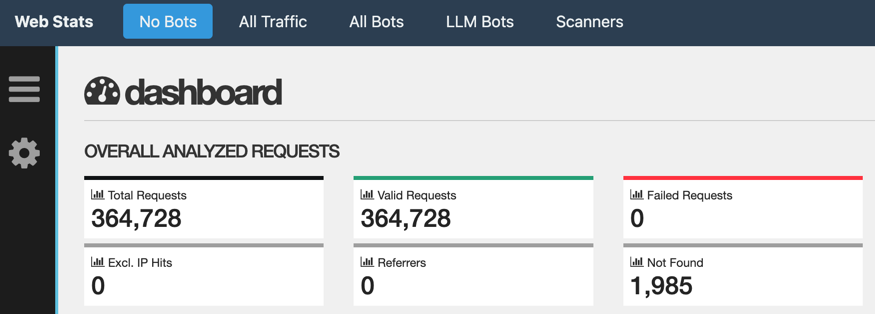

It would have been fairly easy to just run a daily `goaccess /var/log/nginx/[logfile] -o [webpage] --log-format=COMBINED` job, but I created a `pforret/goax` bash wrapper to do something more sophisticated:

- first `cat` and `gunzip` all relevant log files into one big file.
- run the access report not just on all of it, but also on subselections of the total data: only the bots, only the LLM bots, everything except the bots.
- put those reports in one special folder within the website, with an index.html frame around them to easily switch between them.
- help with putting password protection on that special folder.
- delete the temporary joined log file.

This is scheduled as a daily job in Laravel Forge.
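The first two steps of that list can be sketched in Python. This is not the goax script itself (which is bash), only an illustration under my own assumptions: the subset names, the bot patterns and the file layout are hypothetical. The only GoAccess options used are the ones from the command shown earlier.

```python
import gzip
import re
from pathlib import Path

# The User-Agent is the last double-quoted field of a combined-format line.
UA_RE = re.compile(r'"([^"]*)"\s*$')
# Hypothetical patterns for illustration; real bot lists are longer.
BOT_RE = re.compile(r"bot|crawler|spider", re.IGNORECASE)
LLM_RE = re.compile(r"gptbot|claudebot|claude-web|ccbot|bytespider", re.IGNORECASE)


def user_agent(line: str) -> str:
    match = UA_RE.search(line)
    return match.group(1) if match else ""


def is_llm(line: str) -> bool:
    return bool(LLM_RE.search(user_agent(line)))


def is_bot(line: str) -> bool:
    ua = user_agent(line)
    return bool(BOT_RE.search(ua) or LLM_RE.search(ua))


def read_logs(paths):
    """Yield lines from plain and gzip-compressed log files alike."""
    for path in paths:
        opener = gzip.open if path.suffix == ".gz" else open
        with opener(path, "rt", encoding="utf-8", errors="replace") as handle:
            yield from handle


# One filter per report: everything, all bots, LLM bots only, no bots.
SUBSETS = {
    "all": lambda line: True,
    "bots": is_bot,
    "llm": is_llm,
    "nobots": lambda line: not is_bot(line),
}


def write_subsets(paths, work_dir: Path) -> dict:
    """Write one temporary log file per subset and return their paths."""
    work_dir.mkdir(parents=True, exist_ok=True)
    outputs = {name: work_dir / f"{name}.log" for name in SUBSETS}
    handles = {name: p.open("w", encoding="utf-8") for name, p in outputs.items()}
    try:
        for line in read_logs(paths):
            for name, keep in SUBSETS.items():
                if keep(line):
                    handles[name].write(line)
    finally:
        for handle in handles.values():
            handle.close()
    return outputs


def goaccess_command(log_file: Path, report: Path) -> list:
    """Build the GoAccess invocation for one subset (same flags as above)."""
    return ["goaccess", str(log_file), "-o", str(report), "--log-format=COMBINED"]
```

Each command returned by `goaccess_command` could then be run with `subprocess.run(..., check=True)`, after which the temporary subset files can be deleted, mirroring the last step of the list.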

Results

So how much of my traffic is bot traffic?

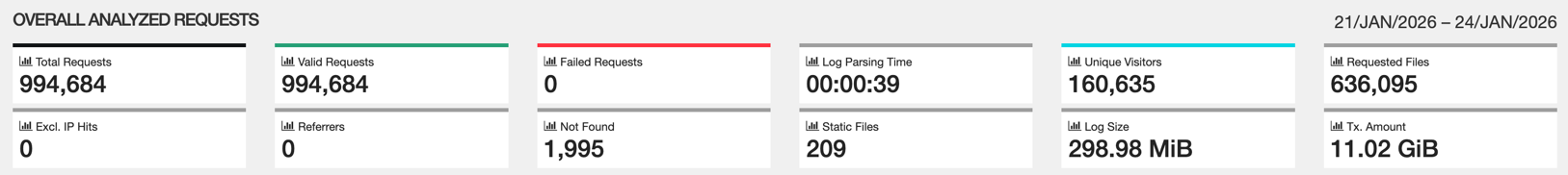

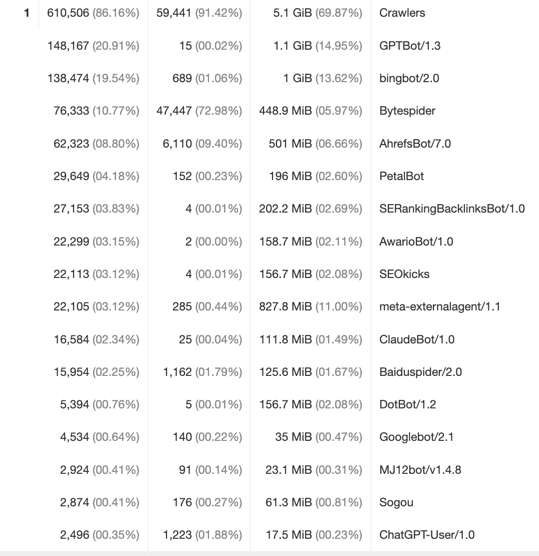

- this is my overall traffic: 994K requests, from 160K visitors.

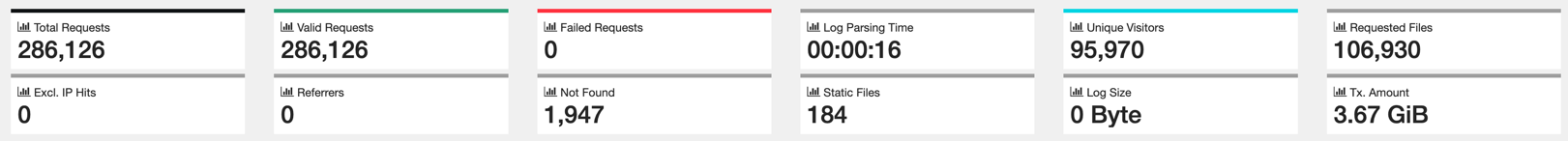

- this is how many of those come from ‘normal’ users: 286K requests (30%), from 96K visitors (60%)

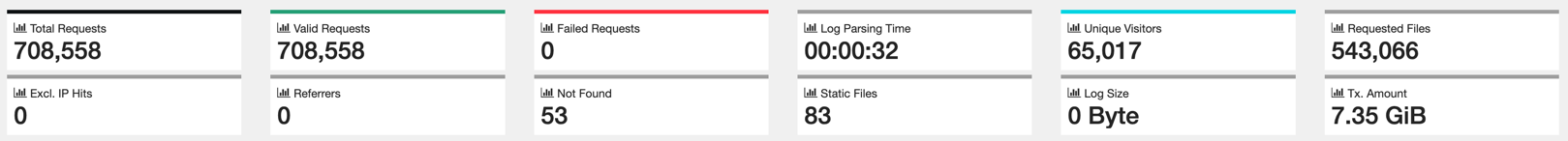

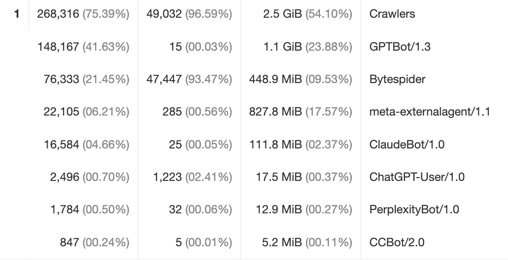

- if we look at all bots (SEO/LLM/…), we see it’s the remainder: 708K requests (70%) from 65K visitors (40%)

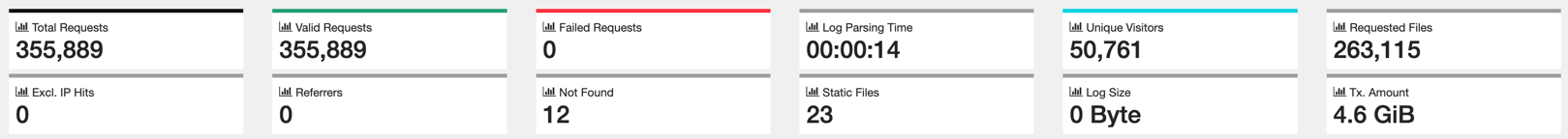

- if we zoom in on only the LLM bots: 335K requests (35%) from 50K visitors (30%)
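As a quick cross-check of the rounded percentages, the shares can be recomputed from the request and visitor counts quoted above. The sketch below uses only those figures; the requests-per-visitor ratio is an extra derived number, not something taken from the GoAccess reports.

```python
# Totals and segments as quoted in the list above (rounded to thousands).
TOTAL_REQUESTS = 994_000
TOTAL_VISITORS = 160_000

SEGMENTS = {
    "normal users": (286_000, 96_000),
    "all bots": (708_000, 65_000),
    "LLM bots": (335_000, 50_000),
}


def share(part: int, total: int) -> float:
    """Return part as a percentage of total."""
    return 100 * part / total


for name, (requests, visitors) in SEGMENTS.items():
    print(
        f"{name:>12}: "
        f"{share(requests, TOTAL_REQUESTS):.1f}% of requests, "
        f"{share(visitors, TOTAL_VISITORS):.1f}% of visitors, "
        f"{requests / visitors:.1f} requests per visitor"
    )

# LLM bots as a share of bot traffic only.
llm_of_bots = share(SEGMENTS["LLM bots"][0], SEGMENTS["all bots"][0])
print(f"LLM bots account for {llm_of_bots:.1f}% of all bot requests")
```

The request shares come out at roughly 29%, 71% and 34%, in line with the rounded figures above. By these numbers, a bot "visitor" makes around 11 requests on average against about 3 for a normal user, and LLM bots account for a bit under half of all bot requests.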

So these LLM bots, who are they? It’s mainly GPTBot (OpenAI), Bytespider (ByteDance/TikTok) and then a bit of Meta/Facebook and ClaudeBot (Anthropic). The rest is de minimis.

Even compared to the ‘classic’ search bots, ChatGPT is very active.

fail2ban

While checking the results, I saw a lot of vulnerability scanning activity, looking for URLs like /wp-admin/... and /wp-login.php (WordPress). I knew I already had fail2ban running on that server, which detects suspect traffic and then blocks the responsible IP addresses for N minutes. However, it was only active for SSH vulnerability scans. With some help from Claude Code, I set up a new rule to include those scanners.

```ini
[Definition]

failregex = ^<HOST> - - \[.*\] "(GET|POST|HEAD) .*(/wp-login\.php|/xmlrpc\.php|/admin\.php|/wp-admin|/\.env|/\.git).*

ignoreregex =
```
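To get a feel for what this filter catches, the `failregex` can be tried out in Python, since fail2ban regexes use Python's regex syntax. The sketch below is a simplification: fail2ban expands `<HOST>` into its own, more elaborate pattern, whereas here it is replaced by a plain `\S+` group. The log lines are made up for illustration.

```python
import re

FAILREGEX = (
    r'^<HOST> - - \[.*\] "(GET|POST|HEAD) '
    r'.*(/wp-login\.php|/xmlrpc\.php|/admin\.php|/wp-admin|/\.env|/\.git).*'
)

# Assumption: a simple stand-in for fail2ban's real <HOST> expansion.
HOST_STAND_IN = r"(?P<host>\S+)"
PATTERN = re.compile(FAILREGEX.replace("<HOST>", HOST_STAND_IN))


def offending_host(line: str):
    """Return the client address if the line matches the filter, else None."""
    match = PATTERN.search(line)
    return match.group("host") if match else None


# Hypothetical log lines, using documentation-only IP ranges.
SCANNER = (
    '198.51.100.7 - - [24/Jan/2026:03:12:45 +0000] '
    '"GET /wp-login.php HTTP/1.1" 404 153 "-" "Mozilla/5.0"'
)
REGULAR = (
    '203.0.113.9 - - [24/Jan/2026:03:13:02 +0000] '
    '"GET /convert/length HTTP/1.1" 200 5120 "-" "Mozilla/5.0"'
)
# The path is harmless, but the referrer happens to contain /wp-admin.
REFERRER_ONLY = (
    '192.0.2.4 - - [24/Jan/2026:03:14:10 +0000] '
    '"GET /index.html HTTP/1.1" 200 100 "https://example.org/wp-admin/" "Mozilla/5.0"'
)

if __name__ == "__main__":
    for line in (SCANNER, REGULAR, REFERRER_ONLY):
        print(offending_host(line))
```

The third line shows a caveat: because `.*` is greedy and not limited to the request path, the pattern also matches when one of the strings appears later in the line, for example in the referrer. The filter also expects the `- -` fields right after the address, so requests with an authenticated user name in the log would not match. On the server itself, the `fail2ban-regex` tool that ships with fail2ban tests a filter against a real log file.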